This article was originally published on Addy Osmani’s blog. It’s being reposted here with the author’s permission.

Roughly: Anytime you find an agent makes a mistake, you take the time to engineer a solution such that the agent never makes that mistake again.

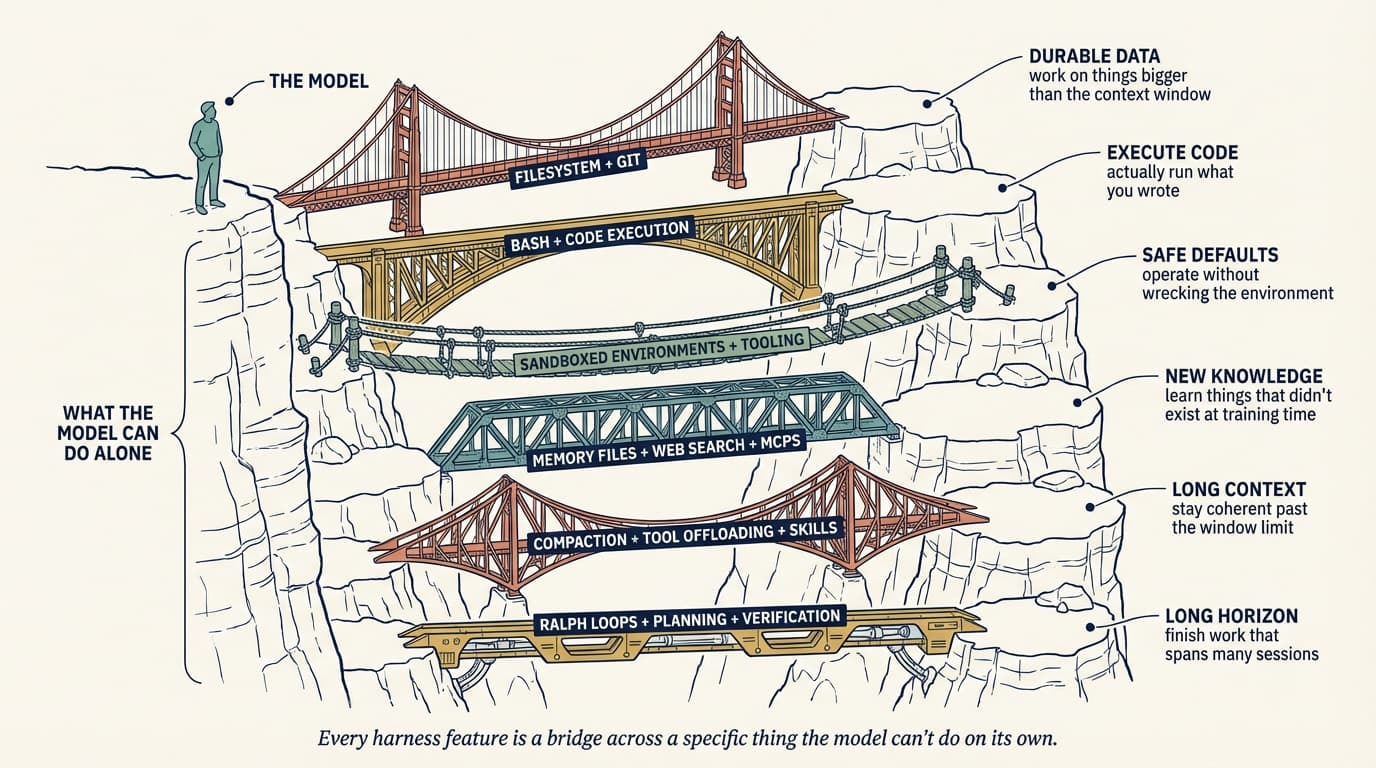

We’ve spent the last two years arguing about models. Which one is smartest, which one writes the cleanest React, which one hallucinates less. That conversation is fine as far as it goes, but it’s missing the other half of the system. The model is one input into a running agent. The rest is the harness: the prompts, tools, context policies, hooks, sandboxes, subagents, feedback loops, and recovery paths wrapped around the model so it can actually finish something.

A decent model with a great harness beats a great model with a bad harness. I’ve watched this play out in my own work over and over. And increasingly the interesting engineering isn’t in picking the model; it’s in designing the scaffolding around it.

That discipline now has a name. Viv Trivedy coined the term harness engineering, and his “Anatomy of an Agent Harness” post is the cleanest derivation of what a harness actually is and why each piece exists. Dex Horthy has been tracking the pattern as it emerges. HumanLayer frames most agent failures as “skill issues” that come down to configuration rather than model weights. Anthropic’s engineering team has published what I think is the best public breakdown of how to design a harness for long-running work. And Birgitta Böckeler has a good overview of what this looks like from the user’s side.

This post is my attempt to pull these threads together.

What’s a harness, actually?

Viv’s one-liner does most of the work:

Agent = Model + Harness. If you’re not the model, you’re the harness.

A harness is every piece of code, configuration, and execution logic that isn’t the model itself. A raw model is not an agent. It becomes one once a harness gives it state, tool execution, feedback loops, and enforceable constraints.

Concretely, a harness includes:

- System prompts, CLAUDE.md, AGENTS.md, skill files, and subagent prompts

- Tools, skills, MCP servers, and their descriptions

- Bundled infrastructure (filesystem, sandbox, browser)

- Orchestration logic (subagent spawning, handoffs, model routing)

- Hooks and middleware for deterministic execution (compaction, continuation, lint checks)

- Observability (logs, traces, cost and latency metering)

Simon Willison reduces the loop part to its essence: an agent is a system that “runs tools in a loop to achieve a goal.” The skill is in the design of both the tools and the loop.

If that sounds like a lot of surface area, it is. And it’s your surface area, not the model provider’s. Claude Code, Cursor, Codex, Aider, Cline: These are all harnesses. The model underneath is sometimes the same, but the behavior you experience is dominated by what the harness does.

coding agent = AI model(s) + harness

This equation, articulated by Viv and echoed by HumanLayer, is where the work actually lives. The debate over the left-hand term (the model) is loud. Most of the actual leverage sits on the right (the harness).

The “skill issue” reframe

There’s a pattern I watch engineers fall into. The agent does something dumb, the engineer blames the model, and the blame gets filed under “wait for the next version.”

The harness-engineering mindset rejects that default. The failure is usually legible. The agent didn’t know about a convention, so you add it to AGENTS.md. The agent ran a destructive command, so you add a hook that blocks it. The agent got lost in a 40-step task, so you split it into a planner and an executor. The agent kept “finishing” broken code, so you wire a typecheck back-pressure signal into the loop.

HumanLayer says: “It’s not a model problem. It’s a configuration problem.” Harness engineering is what happens when you take that seriously.

There’s a striking data point that shows up in both Viv’s write-up and HumanLayer’s. On Terminal Bench 2.0, Claude Opus 4.6 running inside Claude Code scores far lower than the same model running in a custom harness. Viv’s team moved a coding agent from Top 30 to Top 5 by changing only the harness. Post-training couples models to the harness they were trained against. Moving them into a different harness, with better tools for your codebase, a tighter prompt, and sharper back-pressure, can unlock capability the original harness was leaving on the floor.

This is the opposite of the “just wait for GPT-6” narrative. The gap between what today’s models can do and what you see them doing is largely a harness gap.

The ratchet: Every mistake becomes a rule

The most important habit in harness engineering is treating agent mistakes as permanent signals. Not one-off stories to laugh about, not “bad runs” to retry. Signals.

If the agent ships a PR with a commented-out test and I merge it by accident, that’s an input. The next version of my AGENTS.md says “never comment out tests; delete them or fix them.” The next version of my precommit hook greps for .skip( and xit( in the diff. The next version of my reviewer subagent flags commented-out tests as a blocker.
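As a rough illustration of the precommit check described above, here is a minimal Python sketch. The function name `find_disabled_tests` is hypothetical, and the sketch assumes the hook is handed unified diff text (for example, the output of `git diff --cached`); it only inspects added lines.

```python
import re

# Patterns named in the text: `.skip(` and `xit(`. The word boundary keeps
# `xit(` from matching ordinary calls such as `exit(`.
DISABLED_TEST = re.compile(r"\.skip\(|\bxit\(")


def find_disabled_tests(diff_text: str) -> list[str]:
    """Return added diff lines that introduce a skipped or disabled test."""
    hits = []
    for line in diff_text.splitlines():
        # Only inspect added lines; ignore the "+++ b/file" header.
        if line.startswith("+") and not line.startswith("+++"):
            if DISABLED_TEST.search(line):
                hits.append(line[1:].strip())
    return hits
```

A hook script could call this on the staged diff and exit non-zero when the list is non-empty, which is what turns the rule from a request into a constraint.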

You only add constraints when you’ve seen a real failure. You only remove them when a capable model has made them redundant. Every line in a good AGENTS.md should be traceable back to a specific thing that went wrong.

This is also why harness engineering is a discipline rather than a framework. The right harness for your codebase is shaped by your failure history. You can’t download it.

Working backward from behavior

The framing from Viv that I find most useful when I’m actually designing a harness is to start from the behavior you want and derive the harness piece that delivers it. His pattern: behavior we want (or want to fix) → harness design to help the model achieve this.

The useful thing about deriving it this way is that every harness component has a specific job. If you can’t name the behavior a component exists to deliver, it probably shouldn’t be there.

The rest of this section walks the pieces in roughly the order Viv does, with the specific patterns I’ve found worth stealing.

Filesystem and Git: Durable state

The filesystem is the most foundational primitive, and it tends to be underrated because it’s boring. Models can only directly operate on what fits in context. Without a filesystem, you’re copy-pasting into a chat window, and that isn’t a workflow.

Once you have a filesystem, the agent gets a workspace to read data, code, and docs; a place to offload intermediate work instead of holding it in context; and a surface where multiple agents and humans can coordinate through shared files. Adding Git on top gives you versioning for free, so the agent can track progress, roll back errors, and branch experiments.

Most of the other harness primitives end up pointing at the filesystem for something.

Bash and code execution: The general-purpose tool

The main agent loop today is a ReAct loop: The model reasons, takes an action via a tool call, observes the result, and repeats. But a harness can only execute the tools it has logic for. You can try to prebuild a tool for every possible action, or you can give the agent bash and let it build the tools it needs on the fly.
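To make the reason, act, observe cycle concrete, here is a minimal Python sketch with bash as the only tool. The `Model` interface (a callable that returns either a bash command or a final answer) and the names `react_loop` and `run_bash` are assumptions made for illustration; a real harness would put an LLM call behind `model` and run the command inside a sandbox.

```python
import subprocess
from typing import Callable

# Hypothetical model interface: given the transcript so far, return
# ("bash", command) to act or ("final", answer) to stop.
Step = tuple[str, str]
Model = Callable[[list[str]], Step]


def run_bash(command: str) -> str:
    """Run a shell command and return combined stdout/stderr as the observation."""
    proc = subprocess.run(
        command, shell=True, capture_output=True, text=True, timeout=30
    )
    return (proc.stdout + proc.stderr).strip()


def react_loop(model: Model, task: str,
               tool: Callable[[str], str] = run_bash,
               max_steps: int = 10) -> str:
    """Loop until the model returns a final answer or the step budget runs out."""
    transcript = [f"task: {task}"]
    for _ in range(max_steps):
        kind, payload = model(transcript)                 # reason, choose an action
        if kind == "final":
            return payload
        observation = tool(payload)                       # act
        transcript.append(f"$ {payload}\n{observation}")  # observe, then repeat
    return "stopped: step budget exhausted"
```

The step budget is a design choice of this sketch, not something the source prescribes; it simply keeps a confused model from looping forever.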

Willison’s take on this is that agents already excel at shell commands; most tasks collapse to a few well-chosen CLI invocations. Harnesses still ship focused tools, but bash plus code execution has become the default general-purpose strategy for autonomous problem solving. It’s the difference between teaching someone to use a single kitchen gadget and handing them a kitchen.

Sandboxes and default tooling

Bash is only useful if it runs somewhere safe. Running agent-generated code on your laptop is risky, and a single local environment doesn’t scale to many parallel agents.

Sandboxes give agents an isolated operating environment. Instead of executing locally, the harness connects to a sandbox to run code, inspect files, install dependencies, and verify work. You can allow-list commands, enforce network isolation, spin up new environments on demand, and tear them down when the task is done.
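The command allow-list mentioned above can be sketched as a simple string-level gate. The allow-list contents and the name `is_allowed` are hypothetical, and this check is no substitute for real isolation: a sandbox enforces its limits at the container or OS level, and a filter like this would only be one layer in front of it.

```python
import shlex

# Hypothetical allow-list; a real one would come from harness configuration.
ALLOWED = {"ls", "cat", "git", "pytest", "python"}
# Characters that chain, substitute, or redirect commands are rejected outright.
SHELL_META = set(";&|<>`$")


def is_allowed(command: str, allowed: set[str] = ALLOWED) -> bool:
    """Permit a command only if its executable is allow-listed and it
    contains no shell metacharacters."""
    if any(ch in SHELL_META for ch in command):
        return False
    try:
        argv = shlex.split(command)
    except ValueError:  # for example, unbalanced quotes
        return False
    return bool(argv) and argv[0] in allowed
```

Rejecting metacharacters wholesale is deliberately blunt. It blocks some legitimate commands, but it keeps the check easy to reason about.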

A good sandbox ships with good defaults: preinstalled language runtimes and packages, Git and test CLIs, a headless browser for web interaction. Browsers, logs, screenshots, and test runners are what let the agent observe its own work and close the self-verification loop.

The model doesn’t configure its execution environment. Deciding where the agent runs, what’s available, and how it verifies its output are all harness-level calls.

Memory and search: Continual learning

Models have no additional knowledge beyond their weights and what’s currently in context. Without the ability to edit weights, the only way to add knowledge is through context injection.

The filesystem is again the primitive. Harnesses support memory file standards like AGENTS.md that get injected on every start. As the agent edits that file, the harness reloads it, and knowledge from one session carries into the next. It’s a crude but effective form of continual learning.
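A minimal sketch of that injection step, assuming the harness assembles its system prompt as a plain string. The function name `build_system_prompt` and the section header it writes are illustrative choices, not a documented format.

```python
from pathlib import Path


def build_system_prompt(base_prompt: str, repo_root: Path,
                        memory_file: str = "AGENTS.md") -> str:
    """Re-read the memory file on every call so edits made in one session
    are visible at the start of the next."""
    path = repo_root / memory_file
    if not path.is_file():
        return base_prompt
    memory = path.read_text(encoding="utf-8").strip()
    return f"{base_prompt}\n\n# Project memory ({memory_file})\n{memory}"
```

Because the file is read fresh each time rather than cached, anything the agent appends during a session carries forward without extra machinery.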

For knowledge that didn’t exist at training time (new library versions, current docs, today’s data), web search and MCP tools like Context7 bridge the cutoff. These are useful primitives to bake into the harness rather than leaving to the user.

Battling context rot

Context rot is the observation that models get worse at reasoning and completing tasks as the context window fills up. Context is scarce, and harnesses are largely delivery mechanisms for good context engineering.

Three techniques show up repeatedly:

Compaction. When the window gets close to full, something has to give. Letting the API error is not an option for a production harness, so the harness intelligently summarizes and offloads older context so the agent can keep working.

Tool-call offloading. Large tool outputs (think 2,000-line log files) clutter context without adding much signal. When an output exceeds a threshold, the harness keeps only its head and tail tokens in context and offloads the full output to the filesystem, where the agent can read it on demand.

Skills with progressive disclosure. Loading every tool and MCP into context at startup degrades performance before the agent takes a single action. Skills let the harness reveal instructions and tools only when the task actually requires them.
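The tool-call offloading technique above can be sketched in a few lines. This version counts lines rather than tokens to stay dependency-free, and the name `offload_tool_output`, the thresholds, and the marker format are all assumptions for illustration.

```python
from pathlib import Path


def offload_tool_output(output: str, spill_path: Path,
                        max_lines: int = 200, keep: int = 20) -> str:
    """Return small outputs unchanged. For large ones, write the full text to
    disk and return only the head, a marker, and the tail."""
    if keep < 1 or 2 * keep > max_lines:
        raise ValueError("keep must be at least 1 and at most max_lines / 2")
    lines = output.splitlines()
    if len(lines) <= max_lines:
        return output
    spill_path.write_text(output, encoding="utf-8")
    omitted = len(lines) - 2 * keep
    marker = f"[... {omitted} lines omitted; full output at {spill_path} ...]"
    return "\n".join(lines[:keep] + [marker] + lines[-keep:])
```

The marker includes the spill path so the agent knows where to look if the head and tail are not enough.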

Anthropic’s harness post adds one more technique for the really long jobs: full context resets, where the harness tears the session down and rebuilds it from a compact handoff file. They’re explicit that compaction alone wasn’t sufficient for long tasks; sometimes you need to start fresh with a structured brief. This is closer to how humans onboard a new engineer than to how we usually think about “memory.”

Long-horizon execution: Ralph loops, planning, verification

Autonomous long-horizon work is the holy grail and the hardest thing to get right. Today’s models suffer from early stopping, poor decomposition of complex problems, and incoherence as work stretches across multiple context windows. The harness has to design around all of that.

I’ve written about autonomous coding loops like the Ralph loop before in self-improving agents and in my 2026 trends piece, but it’s worth restating in this framing: A hook intercepts the model’s attempt to exit and reinjects the original prompt into a fresh context window, forcing the agent to continue toward a completion goal. Each iteration starts clean but reads state from the previous one through the filesystem. It’s a surprisingly simple trick for turning a single-session agent into a multisession one, and it’s the kind of primitive you’d never derive from “just use a smarter model.”
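Here is a small Python sketch of the shape of such a loop. It models the exit-intercepting hook as an outer loop, which is a simplification; the `RunSession` callable, the `DONE` marker file, and the iteration cap are hypothetical choices for illustration.

```python
from pathlib import Path
from typing import Callable

# Hypothetical session runner: starts from an empty context, does some work,
# writes its state into the workspace, and then exits.
RunSession = Callable[[str, Path], None]


def ralph_loop(prompt: str, workspace: Path, run_session: RunSession,
               done_marker: str = "DONE", max_iterations: int = 20) -> int:
    """Re-run fresh sessions with the same prompt until the workspace
    records that the goal is met. Returns the number of iterations used."""
    for iteration in range(1, max_iterations + 1):
        run_session(prompt, workspace)           # same prompt, fresh context
        if (workspace / done_marker).exists():   # completion state lives on disk
            return iteration
    raise RuntimeError("iteration budget exhausted before completion")
```

Nothing is passed between iterations except the workspace, which mirrors the point in the text: continuity comes from the filesystem, not from the context window.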

Planning is when the model decomposes a goal into a sequence of steps, usually into a plan file on disk. The harness supports this with prompting and reminders about how to use the plan file. After each step, the agent checks its work via self-verification: Hooks run a predefined test suite and loop failures back to the model with the error text, or the model critiques its own output against explicit criteria.

Planner/generator/evaluator splits. Anthropic’s long-running harness work is explicit that separating generation from evaluation into distinct agents outperforms self-evaluation, because agents reliably skew positive when grading their own work. It’s GANs for prose. The related pattern is the sprint contract, where the generator and evaluator negotiate what “done” actually means before code gets written. In my own workflows, writing down the done condition before starting has caught more scope drift than any prompt change I’ve ever made.

Hooks: The enforcement layer

Hooks are what separate “I told the agent to do X” from “the system enforces X.”

A hook is a script that runs at a specific lifecycle point: before a tool call, after a file edit, before commit, on session start. They’re the right place for things the agent should never forget but sometimes does. Run typecheck and lint and tests after every edit and surface failures. Block destructive bash (rm -rf, git push --force, DROP TABLE). Require approval before opening a PR or pushing to main. Auto-format on write so the agent doesn’t waste tokens on whitespace.
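As an illustration of a hook that runs before a bash tool call, here is a minimal sketch covering the three destructive examples named above. The function name `pre_tool_call`, its return shape, and the regular expressions are assumptions; the patterns are illustrative rather than exhaustive (they would miss `rm -r -f`, for instance).

```python
import re

# One pattern per example from the text. Illustrative, not exhaustive.
DESTRUCTIVE = [
    (re.compile(r"\brm\s+-[a-zA-Z]*r[a-zA-Z]*f|\brm\s+-[a-zA-Z]*f[a-zA-Z]*r"),
     "recursive force delete"),
    (re.compile(r"\bgit\s+push\b.*(--force\b|\s-f\b)"), "force push"),
    (re.compile(r"\bdrop\s+table\b", re.IGNORECASE), "DROP TABLE"),
]


def pre_tool_call(command: str) -> tuple[bool, str]:
    """Return (allowed, reason). Intended to run before the harness
    executes a bash command on the agent's behalf."""
    for pattern, label in DESTRUCTIVE:
        if pattern.search(command):
            return False, f"blocked: {label}"
    return True, ""
```

Returning a reason string, rather than only a boolean, gives the harness something to feed back to the model so it can choose a safer approach.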

The principle HumanLayer highlights and I’ve come to agree with is: Success is silent; failures are verbose. If typecheck passes, the agent hears nothing. If it fails, the error text gets injected into the loop and the agent self-corrects. That makes the feedback loop almost free in the common case and immediately actionable when something goes wrong.
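A minimal sketch of that principle, assuming checks are ordinary commands that signal failure with a non-zero exit code. The name `run_check` and the message format are hypothetical.

```python
import subprocess
from typing import Optional


def run_check(argv: list[str]) -> Optional[str]:
    """Run a check command. Return None on success, or the error text
    to inject into the agent loop on failure."""
    proc = subprocess.run(argv, capture_output=True, text=True)
    if proc.returncode == 0:
        return None  # success is silent: nothing enters the context
    output = (proc.stdout + proc.stderr).strip()
    return f"`{' '.join(argv)}` failed (exit {proc.returncode}):\n{output}"
```

A post-edit hook could call this for each configured check and append only the non-`None` results to the conversation, so passing checks cost no tokens.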

AGENTS.md and tool choice

The flat markdown rulebook at the root of your repo is still the single highest-leverage configuration point, because it lands in the system prompt every turn. Conventions go here: package manager, test framework, formatting, “never touch /legacy,” “always use our logger.” Two hard-won lessons:

Keep it short. HumanLayer keeps theirs under 60 lines. Every line is competing for attention, and more rules make each rule matter less. Pilot’s checklist, not style guide.

Earn each line. Rules should trace to a specific past failure or a hard external constraint. If they don’t, they’re noise. Ratchet; don’t brainstorm.

Same discipline applies to tools. Each tool’s name, description, and schema gets stamped into the prompt every request. Ten focused tools outperform fifty overlapping ones because the model can hold the menu in its head. HumanLayer also flags a real security concern here: tool descriptions populate the prompt, so any MCP server you install is trusted text the model will read. A sloppy or malicious MCP can prompt-inject your agent before you’ve typed anything.

What this looks like in production

The clearest public picture I’ve seen of a mature harness is Fareed Khan’s (estimated) breakdown of Claude Code’s architecture.

Almost every concept from the previous section shows up in this diagram as a named component. Context injection is the knowledge layer. Loop state lives in the memory store and the worktree isolator. Destructive-action hooks sit behind the permission gate. Subagent context firewalls are the entire multi-agent layer. The tool dispatch registry is where MCP servers and bash both plug in. Khan’s argument is the same as Viv’s, just worked through a shipping product: Claude Code’s trajectory is about the harness at least as much as about the model underneath it.

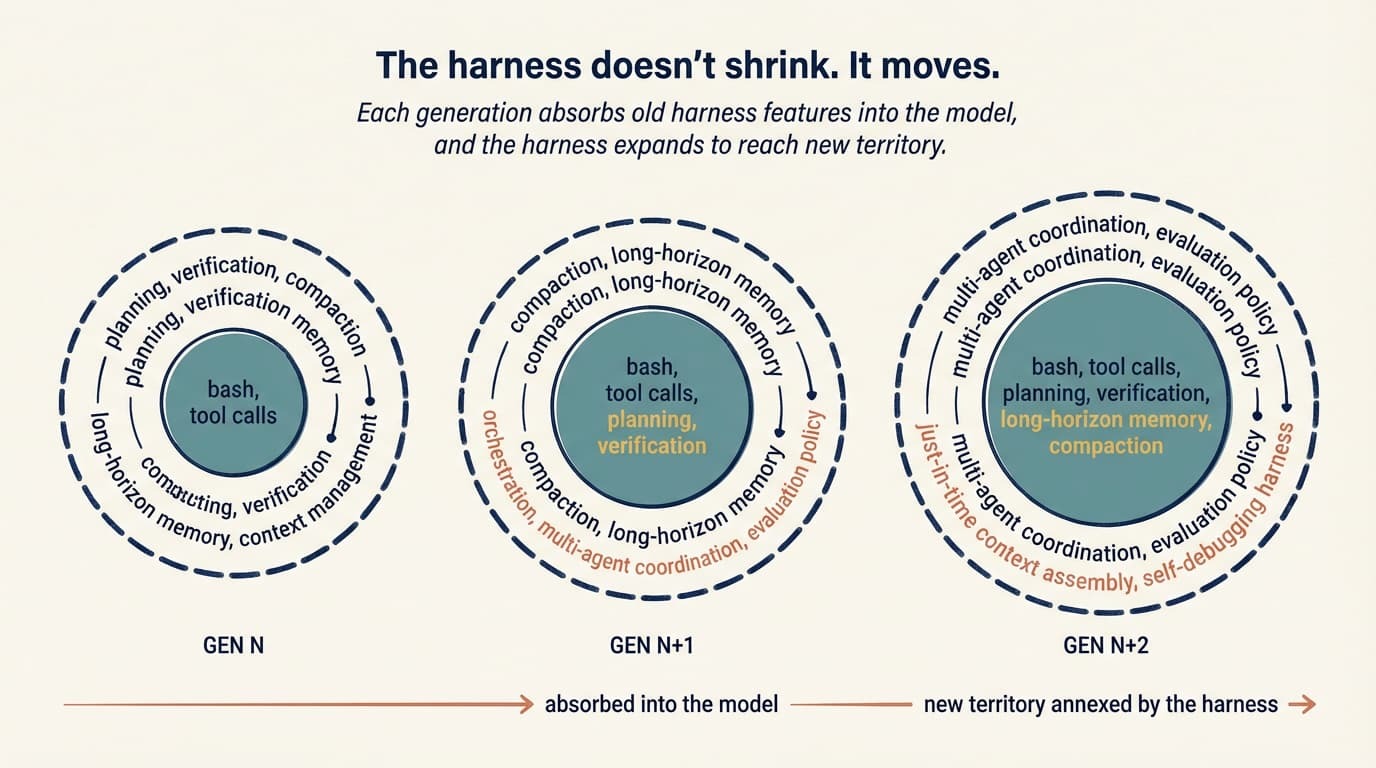

Harnesses don’t shrink; they move

One of the better observations in the Anthropic write-up is that as models improve, the space of interesting harness combinations doesn’t shrink. It moves.

The naive story is that better models make harnesses obsolete. If the model can plan, no planner. If the model is coherent at long horizons, no context resets. And yes, Opus 4.6 largely killed the context-anxiety failure mode (Sonnet 4.5 used to wrap up work prematurely as it approached what it thought was its context limit), which means a whole class of anxiety-mitigation scaffolding I was writing six months ago is now dead code.

But the ceiling moved with the model. Tasks that were unreachable are in play, and they have their own failure modes. The anxiety scaffolding goes away, and in its place you need a multiday memory policy or a harness that coordinates three specialized agents or evaluators for design quality in generated UIs. The assumptions shift, and so does the scaffolding that encodes them.

Anthropic puts it cleanly: “Every component in a harness encodes an assumption about what the model can’t do on its own.” When the model gets better at something, that component becomes load-bearing for nothing and should come out. When the model unlocks something new, new scaffolding is needed to reach the new ceiling.

The model-harness training loop

The other thing that’s happening, which Viv names explicitly, is a feedback loop between harness design and model training.

Today’s agent products are post-trained with harnesses in the loop. The model gets specifically better at the actions the harness designers think it should be good at: filesystem operations, bash, planning, subagent dispatch. That’s why Opus 4.6 feels different inside Claude Code than inside someone else’s harness, and it’s why changing a tool’s logic sometimes causes strange regressions. A truly general model wouldn’t care whether you used apply_patch or str_replace, but cotraining creates overfitting.

The practical implication is twofold. A harness is a living system, not a config file you set up once. And the “best” harness isn’t necessarily the one the model was trained inside; it’s the one designed for your task. Viv’s Top 30 to Top 5 Terminal Bench jump is the clearest proof point I’ve seen.

Harness as a service

Viv’s other contribution is the HaaS framing: harness as a service. The observation is that we’re moving from building on LLM APIs (which give you a completion) to building on harness APIs (which give you a runtime). The Claude Agent SDK, the Codex SDK, and the OpenAI Agents SDK all point in the same direction. You get the loop, the tools, the context management, the hooks, and the sandbox primitives out of the box, and you customize them.

The shift matters because the default path was: build your own loop, wire up your own tool-calling, handle your own conversation state, invent your own approval flow. Now the default path is: pick a harness framework, configure it along the four pillars (system prompt, tools, context, subagents), and put the rest of your effort into domain-specific prompt and tool design.

That’s what makes “skill issue” tractable. You’re not rebuilding an agent from scratch every time something goes wrong. You’re tuning a configuration surface that’s already well-factored.

Viv’s line on this is also the best argument for starting messy: “Good agent building is an exercise in iteration. You can’t do iterations if you don’t have a v0.1.”

Where this is going

Look at the top coding agents side by side (Claude Code, Cursor, Codex, Aider, Cline) and they look more like each other than their underlying models do. The models are different. The harness patterns are converging. I don’t think that’s an accident. It’s the industry slowly discovering the load-bearing pieces of scaffolding that turn a generative model into something that can ship.

Viv’s framing of the open problems is the one I find most exciting: orchestrating many agents working in parallel on a shared codebase; agents that analyze their own traces to identify and fix harness-level failure modes; harnesses that dynamically assemble the right tools and context just-in-time for a given task instead of being preconfigured at startup.

That last one, especially, feels like where harnesses stop being static config and start becoming something closer to a compiler.