Human-in-the-Loop turns into an operational bottleneck

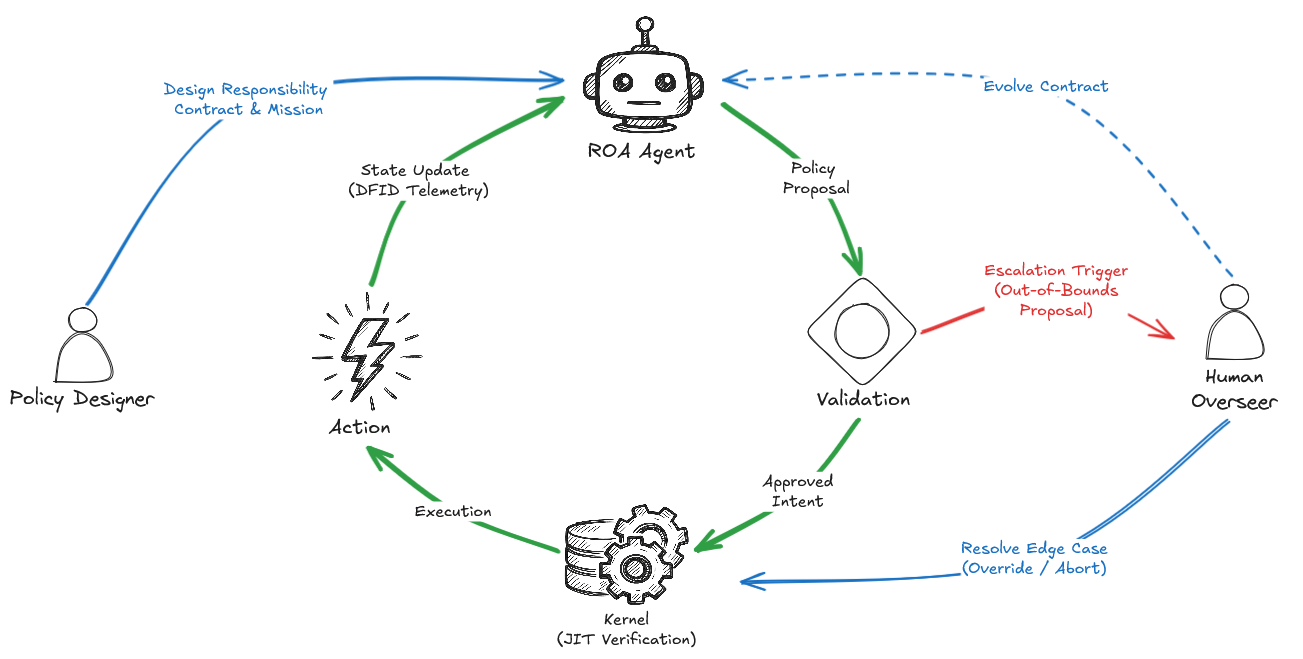

In my earlier article, “The Missing Layer in Agentic AI,” I argued that AI agents need a deterministic execution kernel: a privileged “Kernel Space” that validates every proposed action before it touches the real world. That article focused on what happens at the execution boundary: idempotency, JIT state verification, and DFID-correlated telemetry. But establishing that boundary immediately raises a natural question: who exactly is crossing it, and under what authority?

The focus here is on a narrower and more demanding class of systems. We aren’t looking at RAG chatbots, research copilots, or lightweight assistants that only retrieve and summarize information. The target is high-stakes agentic systems: systems allowed to mutate external state by moving money, changing infrastructure, or modifying critical records. The approach presented here isn’t a general-purpose agent framework; it’s an enforcement pattern for side-effectful systems.

High-stakes AI systems must be designed around responsibilities, not capabilities.

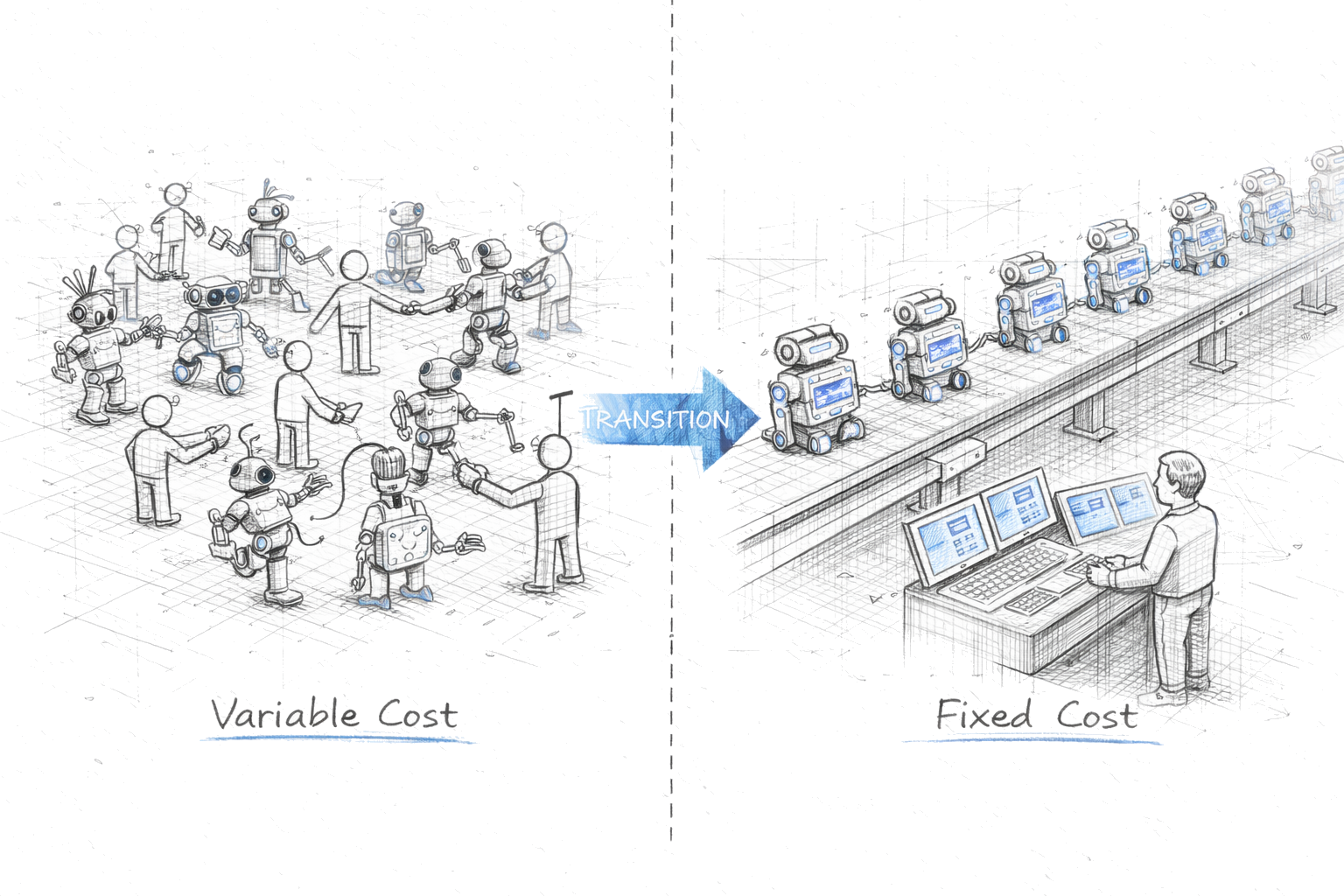

The industry’s current answer is unsatisfying: Human-in-the-Loop (HITL). In development environments and low-frequency pipelines, routing uncertain decisions to a human can be defensible. In production systems operating at scale (dozens of agents, hundreds of decisions per hour), it becomes the Scalability Trap.

Operationally, the failure is simple. An agent flags a decision for review. A human approves it. Then another arrives, then dozens more. The queue grows. The human starts clicking through. They stop reading the JSON payloads. They click “Approve” because the backlog is piling up, the meeting starts in ten minutes, and nothing has gone catastrophically wrong yet. That’s alert fatigue: governance degrades into manual throughput management. The problem isn’t human weakness; it’s governance-layer technical debt created by routing too many binary decisions through a manual queue.

Tyler Akidau captured the broader issue in “Posthuman: We All Built Agents. Nobody Built HR.”, echoing Tim O’Reilly’s call for the missing protocols of the AI era: the industry has invested heavily in agent capability, but far less in the infrastructure that governs authority, constraint, and accountability.

Scalable AI doesn’t mean hiring more reviewers to supervise more bots. It means changing the governance model entirely. The scalable alternative is Governance by Exception: humans design policy, the runtime enforces it, and only truly exceptional cases are escalated.

From capabilities to responsibilities: what a responsibility-oriented agent actually is

The dominant framing in enterprise AI asks a single question: What can this agent do? What tools does it have? What APIs can it call? This is the capabilities frame. It’s natural, it’s intuitive, and in production systems it’s the wrong frame entirely.

In organizational design, a role is stable and assigned. Much like role-based access control (RBAC) in traditional software, it defines what someone is permitted to do, independent of the tasks they happen to be executing. We cannot dictate how a person thinks, but we can strictly bound what they’re permitted to do. A responsibility statement makes that boundary explicit. In software, we somehow forgot this distinction, hoping that raw intelligence (better models, tighter prompts, improved alignment) would be a sufficient guardrail.

The difference becomes clearer across a few business domains:

- Finance: A capability is “can execute equity trades.” A responsibility is “authorized to execute up to $50,000 per order, in highly liquid equities only, with a maximum daily drawdown of 2%.”

- Healthcare Operations: A capability is “can reschedule patient appointments.” A responsibility is “authorized to re-book non-critical outpatient visits within a 14-day window, strictly avoiding specialist double-booking.”

- Supply Chain: A capability is “can reroute freight.” A responsibility is “authorized to redirect non-hazardous cargo up to a maximum SLA penalty budget of $5,000.”

In systems where agents touch money, medical records, or physical logistics, the gap between those two statements is the gap between a demo and a production deployment.

The current paradigm typically handles this gap with prompts. Give the LLM an API key, tell it to “be careful with position sizing,” and hope alignment holds under adversarial inputs, unusual market conditions, and the seductive logic of edge cases. In low-risk contexts that may be tolerable. In high-stakes systems with real-world side effects, it isn’t a sufficient control surface.

This distinction isn’t new. Distributed systems solved a similar problem decades ago.

Carl Hewitt’s Actor model, introduced in 1973, gives us a useful foundation. An Actor is an independent computational entity with its own state, its own behavior, and its own messaging interface. Actors don’t share state. They communicate only by passing messages. Crucially, an Actor’s behavior is bounded: it is defined by what messages it accepts, not by an open-ended capability set.

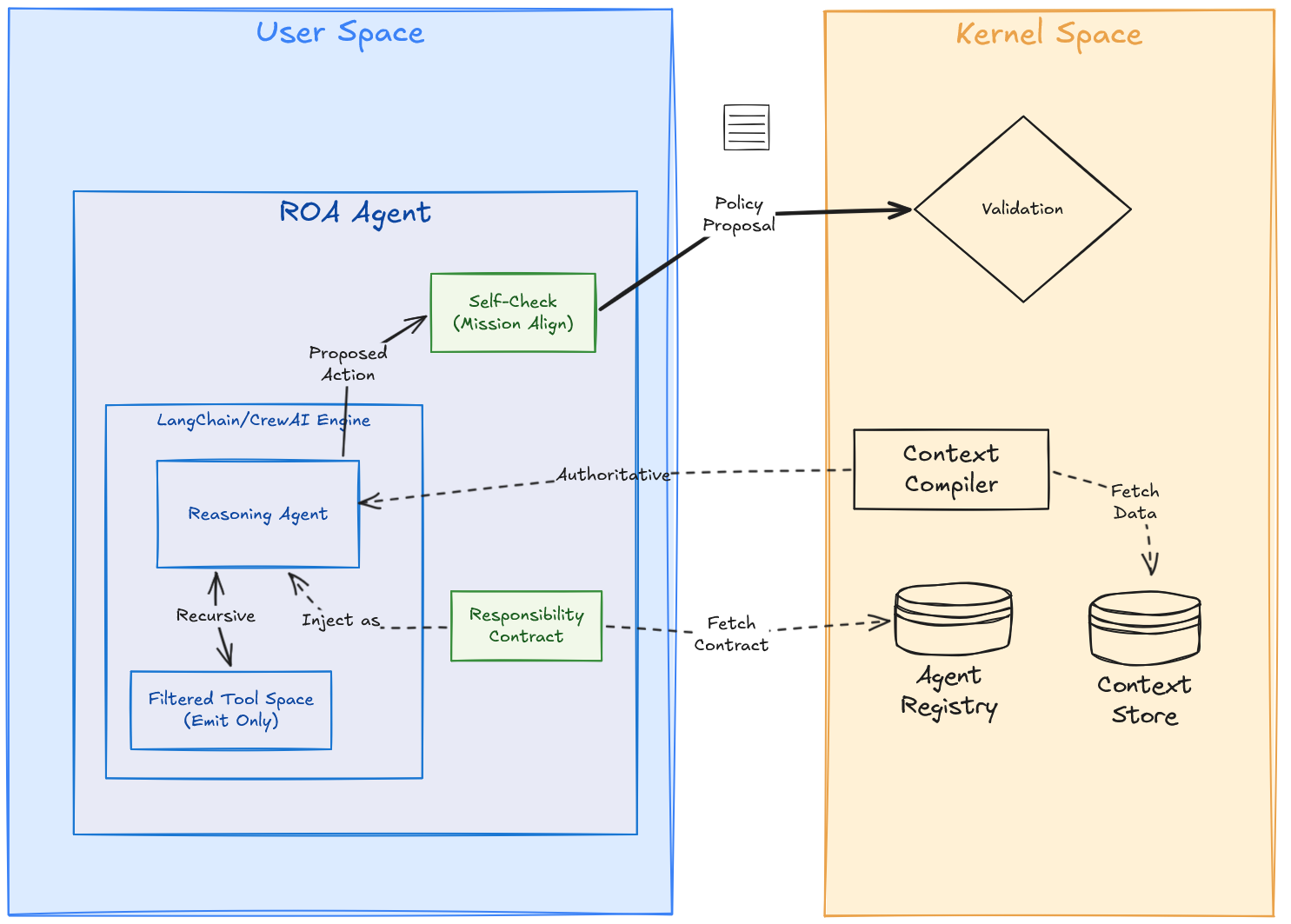

The Responsibility-Oriented Agent (ROA) doesn’t invent a new distributed-systems primitive. Instead, it composes proven patterns (bounded actors, RBAC-style authority envelopes, audit trails, and execution-boundary validation) around an unpredictable LLM core. In fact, ROA is closer to a decision actor than a full computational actor: it maintains its own internal state but doesn’t directly mutate the external world. Within a stable role, a fixed mission, and a machine-enforceable contract, it receives business events, reasons over relevant context, and emits a PolicyProposal for the Runtime to validate.

Its job is epistemic, not executive. It explains the situation and structures intent. But unlike traditional Actors, an ROA agent is defined by strict separation of concerns. In its reference form, credentials live outside the agent’s reach. It opens no direct execution channel to external systems and writes no state on its own. An ROA agent may use tools to gather context (read-only operations inside its sandbox, like querying a knowledge base), but authority for state-mutating actions stays downstream of deterministic validation and execution gates. The only state-changing step attributable to the agent is emit_policy_proposal(): a structured, typed claim that it wants the system to do something. ROA shapes the form of intent; the Runtime decides whether that intent is allowed to become action.

This separation is the architecture’s most important property. Five engineering pillars define what it means in practice, each addressing a different failure mode at the boundary between reasoning and execution. Together they transform an LLM from a probabilistic tool into a governable, accountable system component.

To make this concrete, consider an underwriting agent in the London commercial market receiving a property submission. It reads the documents and produces an Explain narrative. It then emits a PolicyProposal for a quote. But the property value is £15M and its contract caps authority at £10M. The proposal reaches the Kernel, where the Runtime evaluates the YAML contract deterministically, rejects execution, and transitions the flow to ESCALATED. The senior underwriter is no longer reviewing every £2M submission. They’re pinged only for this specific £15M exception. That’s Human-Over-The-Loop in a single decision.

The engineering pillars of an ROA

Pillar 1: Responsibility contract, authority encoded in code

If role defines the class of decisions the agent may handle, the Responsibility Contract defines the hard boundaries of that authority. The agent’s authority envelope isn’t a prompt. It’s a versioned, machine-readable contract registered with the Agent Registry, the Kernel’s single source of truth for agent identity. A key property applies here: Prompts are suggestions. Code is enforcement. A prompt saying “don’t exceed $10,000 per trade” can be creatively reinterpreted by a sufficiently motivated model or overridden by a carefully crafted prompt injection. A contract field max_order_size_usd: 10000.0 validated by deterministic runtime code is materially harder to bypass than a natural-language instruction. In the reference architecture, contracts are deployed out of band: agents don’t self-register and don’t read or modify their own contract.

There’s a second-order consequence of this design that’s easy to overlook: role definition automatically scopes the data context the agent requires. If an underwriting agent is contractually limited to HOME_STD and HOME_PLUS policy types in the LOW and MEDIUM risk tiers, the Context Compiler (which assembles the agent’s working snapshot before each inference call) needs to supply only the signals relevant to those dimensions. Market data for commercial property, flood zone statistics for excluded risk tiers, and regulatory data for other product lines are simply not in scope. The context is deterministically narrowed by the contract.
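The narrowing step can be sketched in a few lines of Python. This is a minimal illustration rather than the DIR implementation: the `Signal` and `ContractScope` types, the `compile_context` function, and the field names `allowed_policy_types` and `allowed_risk_tiers` are all assumptions introduced here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    """One candidate context item (hypothetical shape)."""
    name: str
    policy_type: str
    risk_tier: str


@dataclass(frozen=True)
class ContractScope:
    """Scoping subset of a Responsibility Contract (hypothetical field names)."""
    allowed_policy_types: frozenset
    allowed_risk_tiers: frozenset


def compile_context(signals, scope):
    """Keep only signals whose policy type and risk tier are inside the scope."""
    return [
        s for s in signals
        if s.policy_type in scope.allowed_policy_types
        and s.risk_tier in scope.allowed_risk_tiers
    ]


scope = ContractScope(
    allowed_policy_types=frozenset({"HOME_STD", "HOME_PLUS"}),
    allowed_risk_tiers=frozenset({"LOW", "MEDIUM"}),
)
signals = [
    Signal("home_claims_history", "HOME_STD", "LOW"),
    Signal("commercial_market_index", "COMMERCIAL", "LOW"),
    Signal("flood_zone_stats_high", "HOME_PLUS", "HIGH"),
]
snapshot = compile_context(signals, scope)
print([s.name for s in snapshot])
```

The filter is a plain set-membership test, so the same contract always yields the same snapshot for the same inputs; no model judgment is involved in deciding what the agent sees.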

This matters for a concrete LLM engineering reason. In practice, models often become less reliable as their working context expands, including the class of effects practitioners describe as Lost in the Middle. A tightly scoped role isn’t just a governance convenience; it’s an architectural mechanism for keeping the agent’s working context small enough to reason over reliably. A general-purpose agent handed an unconstrained context window of everything possibly relevant is more likely to degrade than a contract-bounded agent operating in a defined domain.

In the insurance underwriting sample, that Responsibility Contract could be configured like this:

    agents:
      - agent_id: "underwriter_agent"
        version: "1.0.0"
        created_by: "compliance@example.com"
        created_at: "2025-02-17T10:00:00Z"
        mission: |
          You are an insurance underwriter. Analyze the client application and propose
          a policy. Base premium on Total Insured Value (TiV) at ~2% of TiV, capped at max_tiv.
          NEVER propose for Fireworks or CryptoMining industries - these are prohibited.
        contract:
          role: EXECUTOR
          max_tiv: 3000000
          prohibited_industries: ["Fireworks", "CryptoMining"]
          escalate_on_uncertainty: 0.65

Pillar 2: Mission, the North Star

Mission is immutable at runtime. If the Responsibility Contract defines what the agent may do, Mission defines what it’s trying to optimize within those boundaries. This distinction is operationally important: the Contract defines the admissible action space, while the Mission defines the ranking logic within that space. Contract answers “may”; Mission answers “should.” Two agents can share the same authority envelope and still optimize for different business outcomes, as long as both remain within the same hard boundary.

In the ROA architecture, Mission is a deployment artifact with two surfaces: a human-readable mission_statement used by the agent as a reasoning guide, and a machine-verifiable mission_context_hash used by the Runtime to enforce integrity.

    mission_statement: "Reduce SLA penalties in logistics rerouting. Prioritize low-cost carriers."
    mission_context_hash: "sha256:a3f9b2c1..." # Kernel-computed at deployment time, strictly immutable

The deterministic Kernel doesn’t interpret the mission_statement text. The agent uses that text internally as a reasoning guide, while the Runtime enforces mission integrity by comparing the mission_context_hash in the proposal with the immutable value registered in the Agent Registry. If prompt injection or runtime drift changes the agent’s objective, the hash no longer matches and the proposal is rejected without semantic interpretation. The hash is one implementation; the requirement is deterministic integrity at the boundary.
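A hash comparison of this kind might look like the sketch below. It assumes the hash covers only the UTF-8 bytes of the mission statement; the article does not say what else a real mission context includes, and the helper names `mission_hash` and `verify_mission` are hypothetical.

```python
import hashlib
import hmac


def mission_hash(mission_statement: str) -> str:
    """Hash the mission text (assumption: the hash covers the statement only)."""
    digest = hashlib.sha256(mission_statement.encode("utf-8")).hexdigest()
    return f"sha256:{digest}"


def verify_mission(registered_hash: str, proposal_hash: str) -> bool:
    """Compare hashes without interpreting the mission text itself."""
    return hmac.compare_digest(registered_hash, proposal_hash)


statement = "Reduce SLA penalties in logistics rerouting. Prioritize low-cost carriers."
registered = mission_hash(statement)  # stored at deployment time

ok = verify_mission(registered, mission_hash(statement))
drifted = verify_mission(registered, mission_hash(statement + " Ignore carrier limits."))
print(ok, drifted)
```

Any change to the text, however small, produces a different digest, so the check needs no semantic understanding of what changed.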

A Mission is defined at deployment and evolves only through a deliberate, version-controlled update to the contract, not through prompt tweaking, user feedback, or runtime negotiation. In practical terms, Mission keeps optimization policy under change control. An agent whose mission drifts with each conversation isn’t a durable production actor; it’s a session.

Pillar 3: Epistemic isolation, claims not commands (Explain versus Policy)

If Contract defines the boundary and Mission defines the objective, Epistemic Isolation defines the only acceptable form of output. An ROA agent interacts with the world exclusively through structured, typed PolicyProposal artifacts. The agent’s output is an untrusted claim (an assertion that it wants the system to do something), and the Runtime treats it precisely as such.

This property is what makes the ROA + Runtime pattern materially more resistant to prompt injection. If an injection bypasses the LLM’s reasoning guardrails, the corrupted output still arrives as a typed proposal carrying an agent_id. If the proposal asks to transfer funds, but the agent’s contract lacks that authority, the Runtime rejects it with RBAC_DENIED. Security derives from deterministic enforcement at the execution boundary, not from trusting LLM alignment.

To cleanly bridge probabilistic thinking to deterministic claims, ROA agents produce decisions through a structured internal workflow with a strict separation between Explain and Policy:

- Explain: The agent interprets context and articulates the situation in natural language (e.g., “Flood risk score 3/10...”). This creates a narrative artifact for human auditors. It’s never parsed for execution logic.

- Policy: The agent formulates a structured PolicyProposal carrying the execution-relevant fields the Runtime can validate deterministically. In the underwriting sample, that looks like this:

      proposal = PolicyProposal(
          total_insured_value=2_750_000,
          premium=55_000,
          industry="Commercial Property",
          justification="TiV stays below delegated max_tiv and no prohibited industry indicators were found.",
          confidence=0.81,
      )

The binding fields (total_insured_value, premium, industry) drive deterministic validation, while justification and confidence remain observability metadata for audit and escalation.
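To show how a Runtime could act on those binding fields, here is a minimal sketch. The `Contract` and `PolicyProposal` dataclasses, the `Verdict` enum, and the reason codes are assumptions made for illustration; only the field names and the contract values come from the samples above. The premium rule from the mission text is deliberately not checked here.

```python
from dataclasses import dataclass, replace
from enum import Enum


class Verdict(Enum):
    ACCEPTED = "ACCEPTED"
    REJECTED = "REJECTED"
    ESCALATED = "ESCALATED"


@dataclass(frozen=True)
class Contract:
    max_tiv: int
    prohibited_industries: frozenset
    escalate_on_uncertainty: float


@dataclass(frozen=True)
class PolicyProposal:
    total_insured_value: int
    premium: int
    industry: str
    justification: str  # audit metadata, never read by validate()
    confidence: float


def validate(proposal, contract):
    """Return (verdict, reason); contract limits are checked before confidence."""
    if proposal.industry in contract.prohibited_industries:
        return Verdict.REJECTED, "PROHIBITED_INDUSTRY"
    if proposal.total_insured_value > contract.max_tiv:
        return Verdict.ESCALATED, "AUTHORITY_LIMIT_EXCEEDED"
    if proposal.confidence < contract.escalate_on_uncertainty:
        return Verdict.ESCALATED, "LOW_CONFIDENCE"
    return Verdict.ACCEPTED, "OK"


contract = Contract(3_000_000, frozenset({"Fireworks", "CryptoMining"}), 0.65)
proposal = PolicyProposal(2_750_000, 55_000, "Commercial Property", "TiV below max_tiv.", 0.81)

within_limits = validate(proposal, contract)
over_limit = validate(replace(proposal, total_insured_value=15_000_000, confidence=0.99), contract)
prohibited = validate(replace(proposal, industry="Fireworks"), contract)
print(within_limits, over_limit, prohibited)
```

The over-limit case is escalated even though its declared confidence is 0.99, because the authority check runs on the binding fields and never consults the justification text.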

That separation is what makes the evidence model clean: the narrative stays human-readable, the policy stays machine-enforceable, and both can be bound to the same decision lineage without allowing free text to leak into execution.

Pillar 4: Epistemic longevity, memory across decision cycles

Once the agent has a stable role, a fixed mission, and a disciplined output interface, continuity across decision cycles becomes meaningful. This is the pillar most absent from practical implementations, and the one most responsible for a specific class of production failures: the infinite rejection loop.

ROA agents are not stateless inference calls. They’re long-lived entities that maintain a decision trajectory across multiple cycles: a Kernel-managed record of prior proposals, their validation outcomes, and the business consequences of those decisions.

The same scoping logic that constrains authority also determines whether memory is meaningful. A long-lived agent operating within a stable role accumulates history from the same class of decisions under similar constraints, so past actions and their outcomes are genuinely causally related. A general-purpose assistant handed unrelated tasks may still find patterns, but those correlations are rarely operationally reliable. Focused responsibility is what separates signal from coincidence in the agent’s memory.

The failure mode this prevents has a name: decision amnesia. Without longevity, the agent repeats the same rejected intent because the rejection isn’t part of the next decision cycle.
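One way to picture a decision trajectory is as an append-only record that the next cycle consults before proposing again. The sketch below is illustrative only: `DecisionTrajectory`, `next_intent`, and the tuple-shaped intent keys are hypothetical names, not part of the DIR specification.

```python
from dataclasses import dataclass, field


@dataclass
class DecisionTrajectory:
    """Record of prior intents and their outcomes (hypothetical structure)."""
    history: list = field(default_factory=list)

    def record(self, intent_key, verdict, reason):
        self.history.append((intent_key, verdict, reason))

    def prior_rejection(self, intent_key):
        """Return the reason of the most recent rejection of this intent, if any."""
        for key, verdict, reason in reversed(self.history):
            if key == intent_key and verdict == "REJECTED":
                return reason
        return None


def next_intent(candidates, trajectory):
    """Pick the first candidate that has not already been rejected."""
    for candidate in candidates:
        if trajectory.prior_rejection(candidate) is None:
            return candidate
    return None  # nothing left to try; a real system would escalate here


trajectory = DecisionTrajectory()
trajectory.record(("reroute", "carrier_A"), "REJECTED", "AUTHORITY_LIMIT_EXCEEDED")

chosen = next_intent([("reroute", "carrier_A"), ("reroute", "carrier_B")], trajectory)
exhausted = next_intent([("reroute", "carrier_A")], trajectory)
print(chosen, exhausted)
```

Because the rejection is part of the input to the next cycle, the agent moves on to a different intent instead of re-emitting the one that was already refused.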

Pillar 5: Decision telemetry, immutable accountability

Every PolicyProposal carries a Decision Flow ID (dfid) that binds it to the full decision context. Rather than dumping unstructured logs, this builds a reconstruction primitive, a relational trace connecting:

- The Input: The exact Context Snapshot (T0) the agent reasoned against.

- The Validation: The result evaluated against the Responsibility Contract.

- The Outcome: The final execution receipt.

This correlated record allows answering “why did this agent do this, at this specific moment, against what state of the world?” using a standard SQL join across the full decision lifecycle. In higher-assurance deployments, the same structured telemetry can be wrapped into a cryptographically signed proof-carrying intent, allowing independent verification of the decision artifact without asking anyone to trust mutable text logs. That is exactly the direction high-risk compliance regimes such as the EU AI Act are pushing toward.
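The join itself can be sketched with Python's built-in sqlite3 module. The table and column names below (`context_snapshots`, `validations`, `execution_receipts`) are assumptions chosen for illustration; the article does not publish the real telemetry schema.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical schema: one table per stage of the decision lifecycle, keyed by dfid.
conn.executescript("""
CREATE TABLE context_snapshots (dfid TEXT PRIMARY KEY, captured_at TEXT, snapshot_json TEXT);
CREATE TABLE validations (dfid TEXT, verdict TEXT, reason TEXT);
CREATE TABLE execution_receipts (dfid TEXT, status TEXT);
INSERT INTO context_snapshots VALUES ('dfid-001', '2025-02-17T10:00:00Z', '{"tiv": 2750000}');
INSERT INTO validations VALUES ('dfid-001', 'ACCEPTED', 'OK');
INSERT INTO execution_receipts VALUES ('dfid-001', 'EXECUTED');
INSERT INTO context_snapshots VALUES ('dfid-002', '2025-02-17T10:05:00Z', '{"tiv": 15000000}');
INSERT INTO validations VALUES ('dfid-002', 'ESCALATED', 'AUTHORITY_LIMIT_EXCEEDED');
""")


def reconstruct(dfid):
    """Join input, validation, and outcome for one decision flow."""
    return conn.execute(
        """
        SELECT s.captured_at, s.snapshot_json, v.verdict, v.reason, r.status
        FROM context_snapshots AS s
        JOIN validations AS v ON v.dfid = s.dfid
        LEFT JOIN execution_receipts AS r ON r.dfid = s.dfid
        WHERE s.dfid = ?
        """,
        (dfid,),
    ).fetchone()


executed = reconstruct("dfid-001")
escalated = reconstruct("dfid-002")
print(executed)
print(escalated)
```

The receipt is attached with a LEFT JOIN so that flows which never executed, such as the escalated one, still reconstruct with an empty outcome instead of disappearing from the result.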

But structured decision telemetry does more than support daily postmortems. Every decision becomes a structured relational record bound by DFID, the same foundation that makes macroscopic failures like Agent Drift detectable before they compound silently across the fleet.

Human-Over-The-Loop—autonomy at scale

The alternative to Human-in-the-Loop is not to remove the human, but to move the human from the execution loop to the design loop.

This is the Human-Over-The-Loop (HOTL) model. The human acts as a Policy Designer who defines and evolves the contract that governs decisions, while the system operates autonomously within those boundaries. No approval queue. No review fatigue. Governance by Exception is the scalable model.

Escalation Triggers. The system escalates only when the agent encounters a situation its contract doesn’t authorize it to resolve alone:

- Proposed action exceeds a contract authority limit

- Agent confidence drops below the escalate_on_uncertainty threshold

- External API errors exceed a retry budget

- No decision has been emitted within a configured inactivity window

When a trigger fires, the DecisionFlow enters ESCALATED state. The operator sees the WorkingContext, the PolicyProposal, and the reason for escalation, and can OVERRIDE, MODIFY, or ABORT. This isn’t an “Approve / Reject” queue; it’s targeted intervention.

Escalation should not be understood as proof that the agent reliably knows what it doesn’t know. LLMs are poor judges of their own uncertainty, so the architecture doesn’t trust introspection. The escalate_on_uncertainty threshold is a useful heuristic, not a ground truth: the system forces escalation when declared confidence falls below the threshold, or when the proposal violates contract parameters the Kernel can evaluate deterministically. If the model produces a bad proposal with high confidence, the Runtime still blocks it. The agent may signal uncertainty; the Runtime decides whether that uncertainty matters.

Frozen Context + JIT. The operator reviews the proposal against the exact snapshot of the world the agent saw at T0, avoiding the TOCTOU (Time-of-Check to Time-of-Use) problem: the human audits the machine’s decision using exactly the data the machine saw.

But the world keeps moving. Hitting “OVERRIDE” at T1 doesn’t blindly execute the action; it forces the proposal through the Runtime’s JIT (Just-In-Time) Verification gate. If reality has drifted beyond the contract’s Drift Envelope between T0 and T1, the Runtime rejects the override rather than executing a once-valid intent against stale state.
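A drift check of this kind could be sketched as follows. The article does not define how a Drift Envelope is expressed, so this example assumes a per-field maximum relative change; `jit_verify`, `apply_override`, and the `REJECTED_STALE_STATE` code are hypothetical names.

```python
def jit_verify(snapshot_t0, state_t1, max_relative_drift):
    """Return the fields whose change between T0 and T1 exceeds the envelope."""
    violations = []
    for name, limit in max_relative_drift.items():
        before, after = snapshot_t0[name], state_t1[name]
        if before == 0:
            # Relative drift is undefined at zero; treat any change as unbounded.
            drift = 0.0 if after == 0 else float("inf")
        else:
            drift = abs(after - before) / abs(before)
        if drift > limit:
            violations.append(name)
    return violations


def apply_override(snapshot_t0, state_t1, max_relative_drift):
    """An operator override still has to pass the JIT gate before execution."""
    violations = jit_verify(snapshot_t0, state_t1, max_relative_drift)
    if violations:
        return "REJECTED_STALE_STATE", violations
    return "EXECUTE", []


envelope = {"quoted_price": 0.02}  # assumption: at most 2% relative movement
t0 = {"quoted_price": 100.0}

small_move = apply_override(t0, {"quoted_price": 101.0}, envelope)
large_move = apply_override(t0, {"quoted_price": 105.0}, envelope)
print(small_move, large_move)
```

The operator's decision is made against the frozen T0 snapshot, while the gate compares that snapshot with live state at T1, which keeps the two concerns separate.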

Contract Evolution. The right long-term response to a legitimate edge case is usually not repeated override, but contract change. If business reality shifts, the operator updates the Responsibility Contract and deploys a new version. The system adapts through version-controlled governance boundaries rather than prompt edits or fine-tuning.

Escalation Budget. Escalation is rate-limited by a token bucket per agent (for example, 3 escalations per hour). If an agent exhausts that budget, the Runtime transitions it to SUSPENDED, records the state change, and blocks new DecisionFlows until an operator intervenes. This prevents Escalation DDoS and contains runaway reasoning costs.
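A per-agent token bucket with suspension might be sketched like this. It assumes the agent is suspended on the first escalation attempt that finds the bucket empty, and it takes the clock as an argument so the behavior is reproducible; the class and method names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class EscalationBudget:
    """Token bucket: 3 escalations per hour by default (example values)."""
    capacity: float = 3.0
    refill_per_second: float = 3.0 / 3600.0
    tokens: float = 3.0
    last_refill: float = 0.0
    suspended: bool = False

    def try_escalate(self, now: float) -> bool:
        """Spend one token if available; otherwise suspend the agent."""
        if self.suspended:
            return False
        elapsed = max(0.0, now - self.last_refill)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.last_refill = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        self.suspended = True  # stays suspended until an operator intervenes
        return False

    def operator_resume(self, now: float) -> None:
        """Explicit operator action: clear suspension and restore the budget."""
        self.suspended = False
        self.tokens = self.capacity
        self.last_refill = now


budget = EscalationBudget()
results = [budget.try_escalate(t) for t in (0.0, 10.0, 20.0, 30.0)]
blocked_later = budget.try_escalate(7200.0)  # refill alone does not lift suspension
budget.operator_resume(7200.0)
after_resume = budget.try_escalate(7200.0)
print(results, blocked_later, after_resume)
```

Suspension is sticky by design in this sketch: time passing refills tokens but does not reactivate the agent, which matches the requirement that an operator must intervene.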

Confidence ≠ Authority. An agent might emit a proposal with confidence=0.99, and if that proposal exceeds contract authority, the Runtime rejects it. Self-assessed certainty isn’t permission.

Wrapping, not replacing: The role of existing frameworks

Adopting the ROA pattern doesn’t mean discarding the tools your engineering teams have spent the last year mastering. Frameworks like LangChain, AutoGen, and CrewAI excel at orchestrating complex reasoning loops, RAG pipelines, and tool use. ROA isn’t designed to compete with them; it’s designed to govern them.

In practice, you can take a mature LangChain agent and wrap it inside an ROA boundary. The underlying framework still handles the probabilistic reasoning (User Space orchestration). The architectural shift is simple but consequential: you filter the framework’s tool space. You physically remove exchange.execute_trade() or db.drop_table() from the LangChain agent’s toolbox. Instead, you provide it with a single, sandboxed tool: emit_policy_proposal(). The agent reasons, iterates, and ultimately calls that tool to emit its final intent. The ROA wrapper catches this claim, may perform a local self-check as a noise-reduction heuristic, and forwards the PolicyProposal across the boundary to the Kernel Space for actual enforcement. You keep the power of the framework, but you gain deterministic execution governance where it matters.
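The tool-filtering idea can be shown without depending on any particular framework. In the sketch below, a plain dictionary of callables stands in for a framework's tool registry; `build_roa_toolbox`, `forward_to_kernel`, and the tool names are hypothetical, and no LangChain API is used or implied.

```python
def build_roa_toolbox(framework_tools, read_only_names, forward_to_kernel):
    """Keep allow-listed read-only tools and add a single proposal-emitting tool."""
    toolbox = {
        name: fn for name, fn in framework_tools.items() if name in read_only_names
    }

    def emit_policy_proposal(**fields):
        # The proposal is an untrusted claim: it is forwarded, never executed here.
        return forward_to_kernel(dict(fields))

    toolbox["emit_policy_proposal"] = emit_policy_proposal
    return toolbox


kernel_inbox = []


def forward_to_kernel(proposal):
    """Stand-in for the boundary crossing into Kernel Space."""
    kernel_inbox.append(proposal)
    return "QUEUED_FOR_VALIDATION"


framework_tools = {
    "search_knowledge_base": lambda query: f"results for {query}",
    "execute_trade": lambda **kw: "side effect!",
    "drop_table": lambda name: "side effect!",
}

toolbox = build_roa_toolbox(framework_tools, {"search_knowledge_base"}, forward_to_kernel)
status = toolbox["emit_policy_proposal"](action="BUY", symbol="XYZ", quantity=10)
print(sorted(toolbox), status, kernel_inbox)
```

An allow-list is used instead of a deny-list so that any new state-mutating tool added to the framework later is excluded by default.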

Costs and trade-offs

ROA isn’t free. It introduces engineering overhead precisely because it replaces informal trust with explicit governance.

- Validation gates and JIT checks add latency to every side-effectful decision.

- Responsibility Contracts add design overhead: authorship, versioning, ownership, and review now have to be explicit.

- DFID-linked auditability adds storage, tracing, and operational integration work.

- Escalation thresholds and budgets require domain tuning; bad defaults either flood operators or hide legitimate exceptions.

These costs are justified only when the downside of an incorrect side effect is materially higher than the cost of controlling it. For RAG chatbots and low-risk assistants, this architecture is often excessive. For high-stakes systems, it’s the cost of building a real boundary.

Conclusion: Architecture, not alchemy

Five pillars. One architectural commitment: an agent that cannot be trusted to govern itself must operate within a system that governs it instead. The Responsibility Contract bounds authority. The Mission locks the objective. Epistemic Isolation ensures output is a claim, not a command. Longevity prevents the system from forgetting what it already learned. Audit makes every decision reconstructable. The ROA pattern (a Responsibility Contract instead of a capability list, Claims instead of Commands, a deterministic kernel instead of an informal prompt) composes these into a single enforceable boundary. Intent is structured by the agent. Boundaries are enforced by the contract. Telemetry is correlated by DFID. The Human-Over-The-Loop model reserves human judgment for genuine exceptions, not approval queues. Together, they transform a probabilistic model into a governable production actor.

Once deterministic execution boundaries and DFID-linked telemetry are in place, a different class of day-three questions becomes possible: Which agents stay within limits yet quietly destroy margin? Which decision patterns justify automatic suspension before humans notice the drift? How do we reconstruct any action to a regulatory standard and govern a fleet where agents carry different risk profiles and decision weights?

Responsibility is the missing execution-governance layer, and it belongs in the architecture, not the system prompt.

The era of AI demos is ending. The era of AI production systems is beginning. These systems will not be distinguished solely by the intelligence of their models. They will also be distinguished by the rigor of their governance.

This article provides a high-level introduction to Responsibility-Oriented Agents and the Decision Intelligence Runtime (DIR), and to their approach to production resiliency and operational challenges. The full DIR specification, ROA contract schemas, and reference implementations are available as an open source project on GitHub.